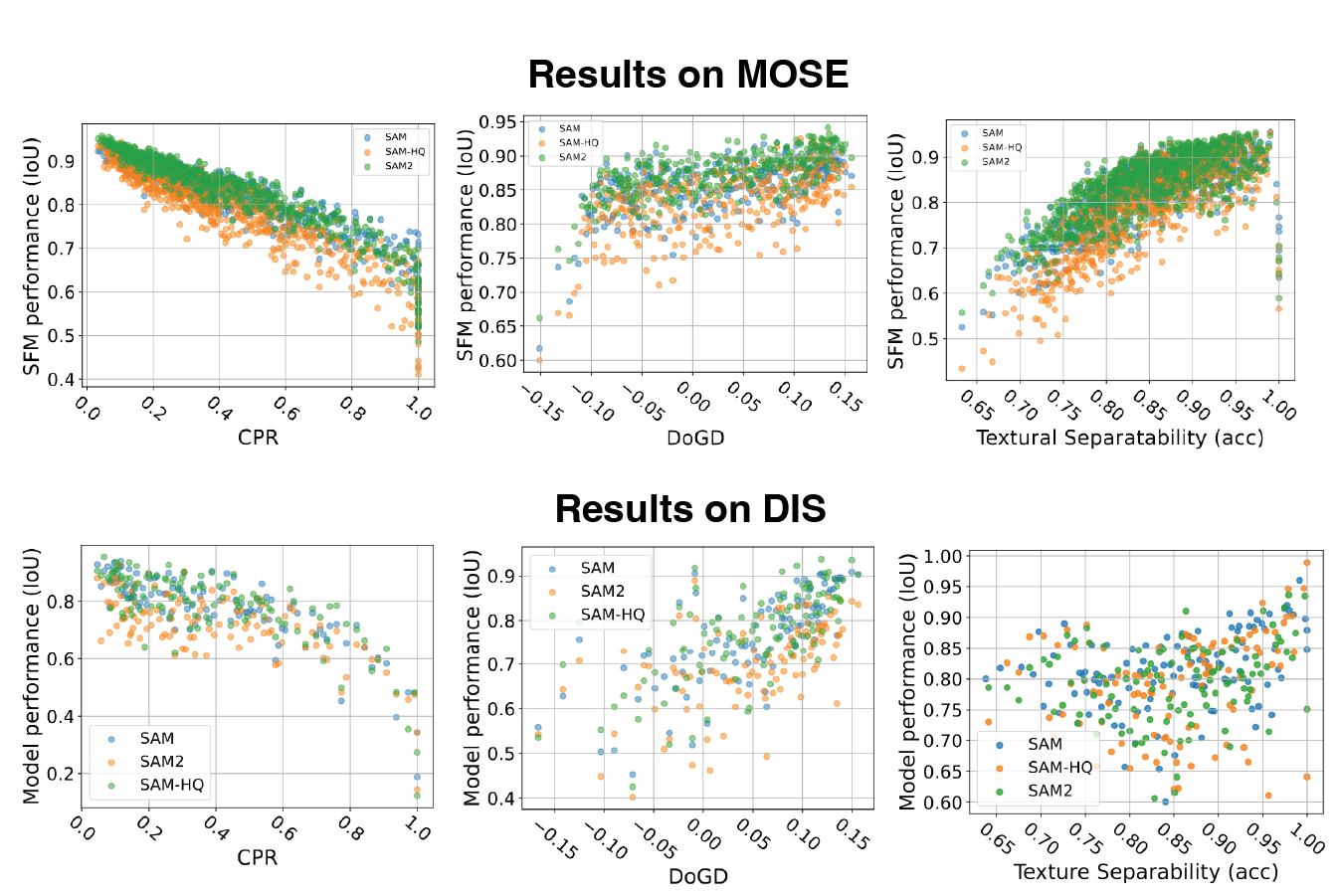

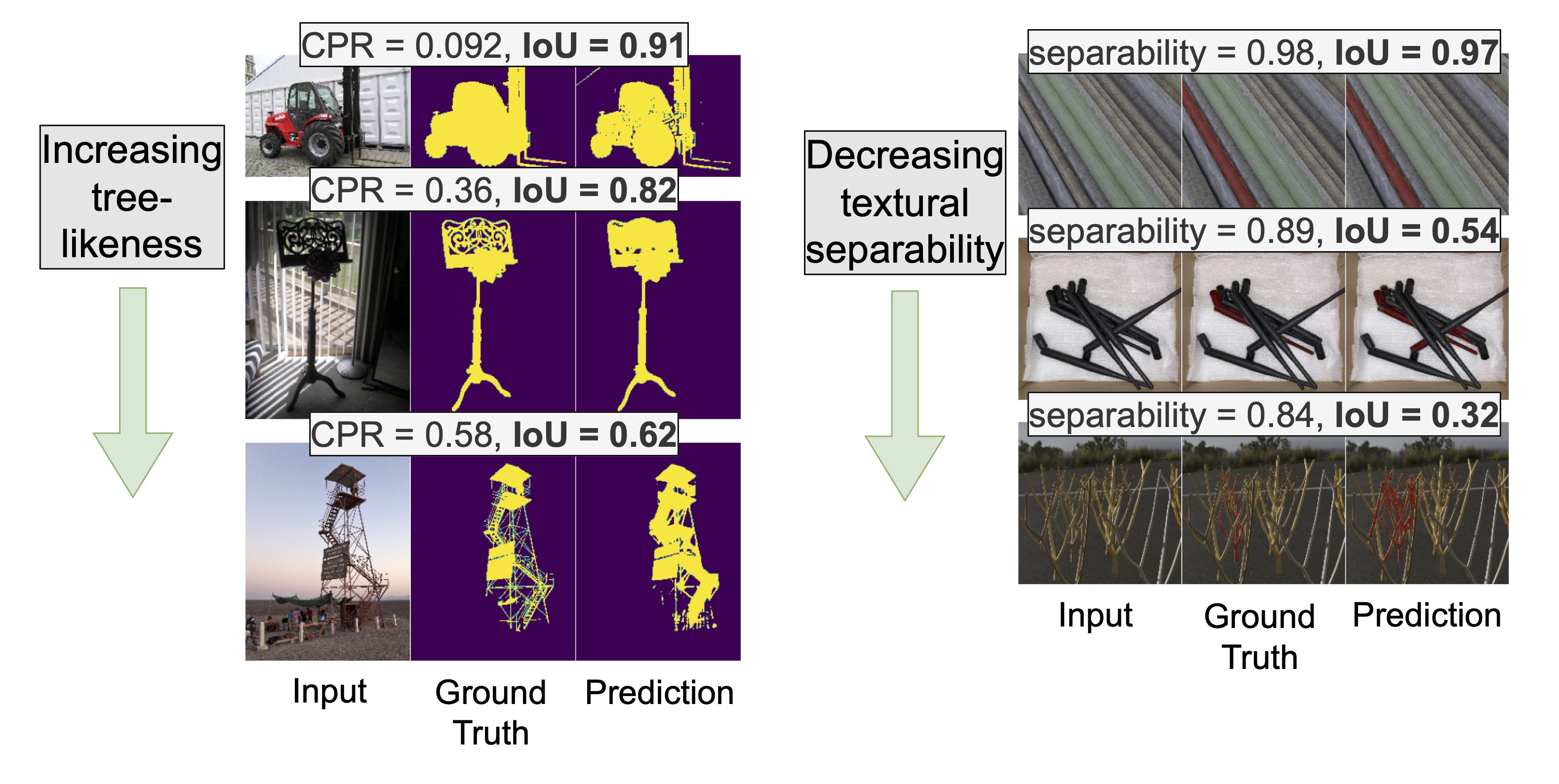

Segmentation foundation models (SFMs) struggle with tree-like and low-contrast objects. We introduce interpretable metrics that quantify these two object properties and show that SFM performance, measured by intersection over union (IoU), correlates noticeably with them. This provides the first quantitative framework for modeling these failure modes.
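To make the evaluation idea concrete, here is a minimal sketch of how a per-object IoU could be computed and then correlated with a per-object property score. The function names `iou` and `pearson_correlation` are hypothetical, and the choice of Pearson correlation is an assumption for illustration; this is not the authors' metric code or their exact correlation measure.

```python
import numpy as np


def iou(pred_mask, true_mask):
    """Intersection over union of two binary masks of the same shape."""
    pred = np.asarray(pred_mask, dtype=bool)
    true = np.asarray(true_mask, dtype=bool)
    union = np.logical_or(pred, true).sum()
    if union == 0:
        # Both masks are empty: treat as a perfect match (a convention, assumed here).
        return 1.0
    return float(np.logical_and(pred, true).sum() / union)


def pearson_correlation(property_scores, iou_scores):
    """Pearson correlation between per-object property scores and IoUs.

    Assumption: one scalar property score per object (e.g. a tree-likeness
    or contrast value) paired with that object's IoU.
    """
    x = np.asarray(property_scores, dtype=float)
    y = np.asarray(iou_scores, dtype=float)
    return float(np.corrcoef(x, y)[0, 1])


# Toy masks (made up for illustration): one overlapping pixel, two in the union.
pred = [[1, 1], [0, 0]]
true = [[1, 0], [0, 0]]
print(iou(pred, true))  # 0.5
```

In practice, `pearson_correlation` would be called on real per-object measurements across a dataset; a rank-based measure could be swapped in if the relationship is monotonic but not linear.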